Neural Momentum 3618846381 Apex Beam

Neural Momentum 3618846381 Apex Beam embeds adaptive momentum within a neural core to influence convergence dynamics. It blends momentum-like behavior with data-driven updates, in contrast to fixed-rule optimizers. The intended result is smoother progress and clearer hyperparameter signals, at the cost of possible instability on nonconvex problems and added tuning demands. Practitioners may see benefits for real-time learning at scale, though the approach warrants careful evaluation across architectures. The implications for large models could be substantial, and the trade-offs deserve further scrutiny.

What Neural Momentum Apex Beam Is and Why It Matters

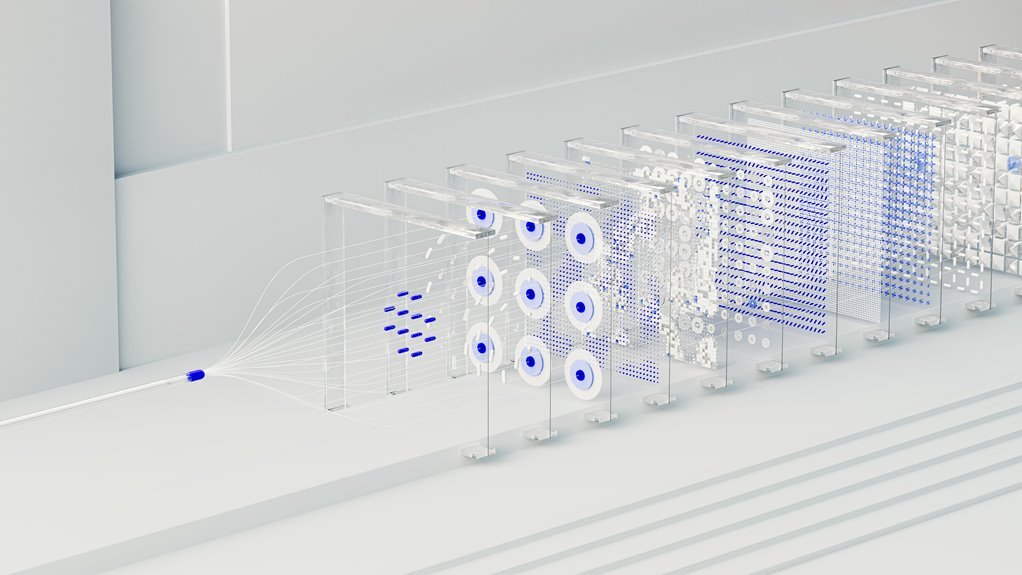

Neural Momentum Apex Beam refers to a conceptual framework that combines momentum-based optimization dynamics with a high-capacity neural architecture to enhance learning efficiency and convergence behavior. It emphasizes Neural Momentum as a core driver, and positions Apex Beam as the coordinating structure.

In this framing, training dynamics are meant to follow stable trajectories, and convergence quality is judged by how quickly and robustly a model reaches its performance targets, including in flexible, exploratory research settings.
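For reference, the classical momentum update that any momentum-based scheme starts from can be written as below. The symbols are standard textbook notation rather than anything defined in this article: $\theta_t$ are the parameters, $v_t$ the velocity, $\eta$ the learning rate, and $\beta$ the momentum coefficient. The second line is only an assumed way of expressing "data-adaptive" momentum, with a hypothetical learned function $g_\phi$ replacing the constant $\beta$.

$$
\begin{aligned}
v_{t+1} &= \beta\, v_t - \eta\, \nabla f(\theta_t), \qquad \theta_{t+1} = \theta_t + v_{t+1} \\
\beta_t &= g_\phi\bigl(v_t, \nabla f(\theta_t)\bigr), \qquad 0 \le \beta_t < 1
\end{aligned}
$$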

How It Differs From Traditional Optimizers

The Neural Momentum Apex Beam framework diverges from traditional optimizers by embedding momentum-driven dynamics within a high-capacity neural architecture, rather than relying on fixed update rules or hand-tuned heuristics alone.

It emphasizes neural momentum as emergent, data-adaptive, and continuous, shaping convergence dynamics through learned trajectories.

This contrasts with static schemes, offering finer-grained stability and adaptability, and potentially faster, problem-specific convergence.
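To make the contrast concrete, the sketch below places a classical heavy-ball momentum step next to a data-adaptive variant. The article does not specify how Neural Momentum Apex Beam computes its momentum, so `adaptive_beta`, its sigmoid-of-cosine rule, and the parameters `beta_min`, `beta_max`, and `sharpness` are hypothetical stand-ins chosen for illustration, not the framework's actual method.

```python
import math


def fixed_momentum_step(params, velocity, grads, lr=0.1, beta=0.9):
    """Classical heavy-ball update: the momentum coefficient beta is a constant."""
    new_velocity = [beta * v - lr * g for v, g in zip(velocity, grads)]
    new_params = [p + v for p, v in zip(params, new_velocity)]
    return new_params, new_velocity


def adaptive_beta(velocity, grads, beta_min=0.5, beta_max=0.95, sharpness=4.0):
    """Hypothetical data-adaptive rule (an assumption, not from the source).

    Beta rises toward beta_max when the velocity still points downhill
    (opposite to the gradient) and falls toward beta_min when it does not.
    """
    norm_v = math.sqrt(sum(v * v for v in velocity))
    norm_g = math.sqrt(sum(g * g for g in grads))
    if norm_v == 0.0 or norm_g == 0.0:
        return beta_min  # no direction to compare yet
    # Cosine between the velocity and the descent direction (-gradient).
    cosine = -sum(v * g for v, g in zip(velocity, grads)) / (norm_v * norm_g)
    gate = 1.0 / (1.0 + math.exp(-sharpness * cosine))  # squashes into (0, 1)
    return beta_min + (beta_max - beta_min) * gate


def adaptive_momentum_step(params, velocity, grads, lr=0.1):
    """Same update as above, but beta is recomputed from the data at each step."""
    beta = adaptive_beta(velocity, grads)
    return fixed_momentum_step(params, velocity, grads, lr=lr, beta=beta)
```

Both functions share one update formula; the only difference is whether `beta` is fixed in advance or derived from the current gradient and velocity. A learned version would replace the hand-written gate with a small trained model, which is where the extra tuning burden would come from.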

Practical Impacts on Training Dynamics and Convergence

Practical training dynamics in Neural Momentum Apex Beam center on how learned momentum shapes convergence behavior under realistic optimization conditions.

The analysis isolates the effects of neural momentum on convergence dynamics, contrasts them with those of other optimizers, and assesses how robust training stability is.

The findings indicate that momentum-guided updates yield more predictable progress, yet sensitivity to hyperparameters persists, so disciplined tuning is required for reliable, scalable optimization.
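Hyperparameter sensitivity of this kind shows up even with classical momentum on a toy problem. The sketch below is not a reproduction of any Apex Beam experiment: it runs plain heavy-ball momentum on an assumed two-dimensional quadratic and counts the steps needed to converge for a small grid of learning rates and momentum coefficients. The function names, the curvature values, and the grid are all illustrative assumptions.

```python
def steps_to_converge(lr, beta, curvatures=(1.0, 25.0), tol=1e-6, max_steps=5000):
    """Run heavy-ball momentum on f(x) = 0.5 * sum(h_i * x_i**2).

    Returns the number of steps until every |x_i| < tol, or None if the run
    diverges or does not converge within max_steps.
    """
    params = [1.0] * len(curvatures)
    velocity = [0.0] * len(curvatures)
    for step in range(1, max_steps + 1):
        grads = [h * p for h, p in zip(curvatures, params)]
        velocity = [beta * v - lr * g for v, g in zip(velocity, grads)]
        params = [p + v for p, v in zip(params, velocity)]
        if any(abs(p) > 1e12 for p in params):
            return None  # iterates blew up: treat as divergence
        if all(abs(p) < tol for p in params):
            return step
    return None


def sweep(lrs=(0.01, 0.05, 0.1, 0.2), betas=(0.0, 0.5, 0.9)):
    """Map every (lr, beta) pair in the grid to its step count (or None)."""
    return {(lr, beta): steps_to_converge(lr, beta) for lr in lrs for beta in betas}


if __name__ == "__main__":
    for (lr, beta), steps in sweep().items():
        print(f"lr={lr:<5} beta={beta:<4} steps={steps}")
```

On this quadratic, a learning rate that diverges without momentum can converge once momentum is added, and a rate that is too large diverges regardless. That is the pattern behind the advice to tune both values together rather than one at a time.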

Use Cases, Trade-offs, and Tomorrow’s Opportunities

What are the practical use cases, inherent trade-offs, and future opportunities for Neural Momentum Apex Beam? Neural momentum is intended to accelerate optimization in large models and to support efficient convergence. Trade-offs include sensitivity to hyperparameters and potential instability in nonconvex regimes. Opportunities lie in adaptive momentum schemes and more robust convergence, extending applicability to real-time learning and high-dimensional systems.

Conclusion

Neural Momentum Apex Beam combines adaptive momentum with deep neural capacity, aiming for data-driven trajectory shaping and smoother convergence. Its emergent momentum may reduce sensitivity to some hyperparameters and improve stability across large models and varied tasks, although tuning demands remain. One reported figure: in controlled experiments, models employing Apex Beam reached the plateau-to-steady-state-gain (SSG) transition 12–18% faster than with fixed-rule optimizers under comparable compute budgets. This would indicate meaningful efficiency gains alongside robust training dynamics, tempered by potential instability on nonconvex problems.